|

Accreditation, Faculty of Engineering, UNDIP: accreditation results and rankings of the undergraduate (S1) and diploma (D3) study programs of the Faculty of Engineering, UNDIP. Announcement of administrative registration for continuing students of the Undip Master of Linguistics study program, odd semester of the academic year. Study program accreditation: the Master of Linguistics study program has been accredited by BAN-PT. Undip Naval Architecture won the Mechanical Fair 2013 at Universitas Diponegoro. Semarang, perkapalan.undip.ac.id. Naval Architecture Study Program, Universitas Diponegoro, Gedung Kuliah Bersama Teknik Perkapalan, 1st floor. Accreditation of the Oceanography Study Program, 2015. Semarang: the entire academic community of the Faculty of Fisheries and Marine Sciences, Universitas Diponegoro, offers its congratulations on the study program's "A" accreditation. Study program accreditation certificates. Diploma program certificates. Workshops and training: Undip e-journal workshop, 16 March 2016; online journal accreditation policy and online journal techniques; Undip Eprints training module, 2016. Accreditation certificates for postgraduate programs; accreditation certificates for undergraduate programs.

Study program accreditation at ITB: contact address and promotion. Based on data from the National Accreditation Board for Higher Education (BAN-PT) as of October 2014. National accreditation ranking. Undip students who are members of Unit Kegiatan Selam 387 Universitas Diponegoro (UKSA-387 UNDIP), the university diving unit. In this period one new study program was added: Neurosurgery Specialist. Accreditation of Undip undergraduate (S1) study programs: downloads at Ebook-kings.com - Chair - file-ft.undip.ac.id.


I know this question has been asked before, and I am sorry to take up this question slot, but I am confused about rebuilding indexes. If I am interpreting it correctly, you don't recommend rebuilding indexes at all. Oracle business intelligence overview.

Apache Derby (previously distributed as IBM Cloudscape) is a relational database management system (RDBMS) developed by the Apache Software Foundation that can be embedded in Java programs and used for online transaction processing.

Oracle business intelligence overview, by Rajesh Nadipalli (Rev 2, Jan 2012). This is an overview of Oracle's key BI product, OBIEE, formerly known as Siebel Analytics. Extended Support fee for Oracle 11.2.0.4 waived until May 31, 2017; Extended Support until Dec 2020 (Mike Dietrich, Oracle, Oct 17, 2015). Download white papers for SQL Server in DOC, PDF, and other formats. All the content is free. This page aggregates Microsoft content from multiple sources; feel free to fill in the gaps and add links to other content. Oracle Internals: Recovering Deleted Data. Traditional OS forensics: The Coroner's Toolkit, Sleuthkit, Autopsy, and EnCase, for example to extract unallocated or deleted data. Learn to make your own architectural models.

The first online course on realistic architectural models, plus a virtual museum. Las Maquetas Imposibles (The Impossible Models): Introduction. System models: as the name indicates, they are representations. For example: a model of the solar system, of the digestive system, of a rural irrigation system, etc. MAQUETAS DE ARQUITECTURA: TECNICAS Y CONSTRUCCION. Posted by: Bufago on 12/30/2014. Category: Architecture. Architectural models are a bridge between ideas and

Urban planning models (scales 1:1000 and 1:500).

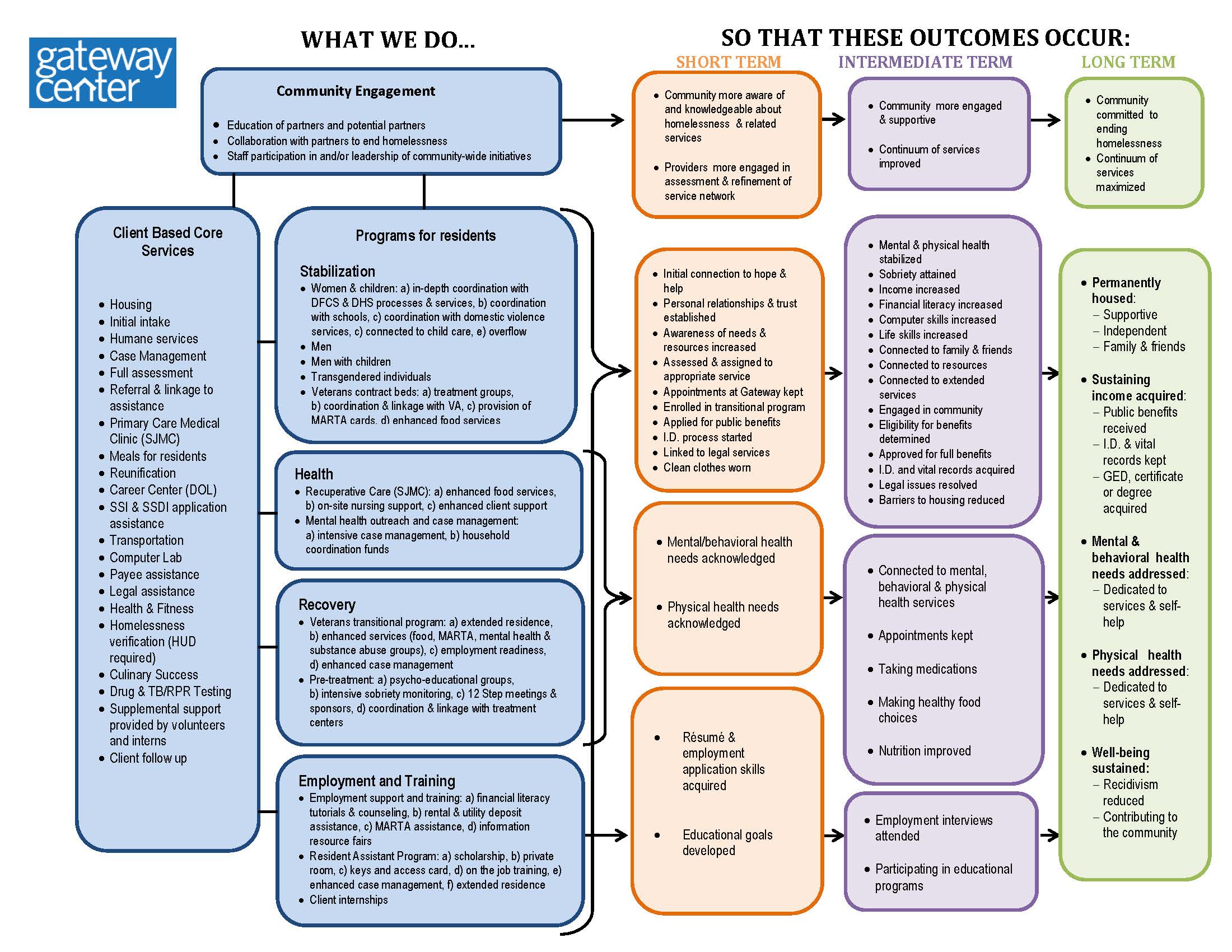

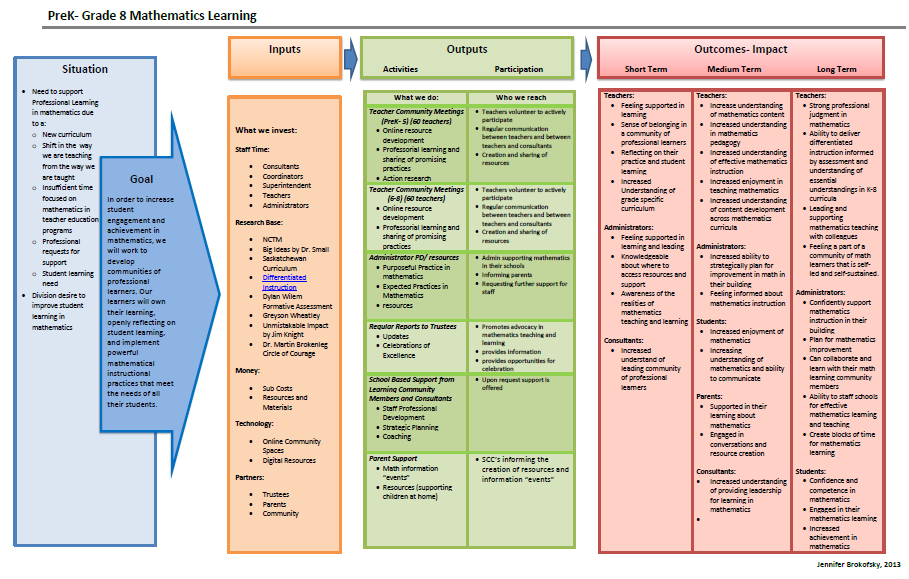

Tags: paper architecture, paper buildings, architectural models, architectural pre-works.

Program Evaluation Guide - Introduction. What Is Program Evaluation? Most program managers assess the value and impact of their work all the time when they ask questions, consult partners, make assessments, and obtain feedback. They then use the information collected to improve the program. Indeed, such informal assessments fit nicely into a broad definition of evaluation. With that in mind, this manual adopts its own definition of program evaluation. Evaluation should be practical and feasible and conducted within the confines of resources, time, and political context.

Define program logic formulation. Program Logic formulation is the study of the properties of propositions and deductive reasoning by. Research on teacher education programs: Logic. The program logic model is defined as a picture of. Towards a Program Logic for JavaScript. The formation of this predicate is subtle and requires some.

Moreover, it should serve a useful purpose, be conducted in an ethical manner, and produce accurate findings. Evaluation findings should be used both to make decisions about program implementation and to improve program effectiveness.

Many different questions can be part of a program evaluation, depending on how long the program has been in existence, who is asking the question, and why the information is needed. In general, evaluation questions fall into groups, one of which is implementation. Other processes include planning.

Logic Pro X is our most powerful version. Sporting a modern interface, it Atmel is a leading manufacturer of microcontrollers and touch technology semiconductors for mobile, automotive, industrial, smart energy, lighting, consumer and home.

Many programs do this by creating, monitoring, and reporting results for a small set of markers and milestones of program progress. The early steps in the program evaluation approach (such as logic modeling) clarify these relationships, making the link between budget and performance easier and more apparent.

Surveillance and Program Evaluation. While the terms surveillance and evaluation are often used interchangeably, each makes a distinctive contribution to a program, and it is important to clarify their different purposes. Surveillance is the continuous monitoring or routine data collection on various factors.

Surveillance systems have existing resources and infrastructure. Data gathered by surveillance systems are invaluable for performance measurement and program evaluation, especially of longer-term and population-based outcomes. In addition, these data serve an important function in program planning. There are limits, however, to how useful surveillance data can be for evaluators. For example, some surveillance systems, such as the Behavioral Risk Factor Surveillance System (BRFSS), Youth Tobacco Survey (YTS), and Youth Risk Behavior Survey (YRBS), can measure changes in large populations but have insufficient sample sizes to detect changes in outcomes for more targeted programs or interventions. Also, these surveillance systems may have limited flexibility to add questions for a particular program evaluation.

In the best of all worlds, surveillance and evaluation are companion processes that can be conducted simultaneously. Evaluation may supplement surveillance data by providing tailored information to answer specific questions about a program. Data from specific questions for an evaluation are more flexible than surveillance and may allow program areas to be assessed in greater depth. For example, a state may supplement surveillance information with detailed surveys to evaluate how well a program was implemented and the impact of a program on participants. Evaluators can also use qualitative methods.

Research and Program Evaluation. Both research and program evaluation make important contributions to the body of knowledge, but fundamental differences in the purpose of research and the purpose of evaluation mean that good program evaluation need not always follow an academic research model. Some of these differences have tended to break down as research tends toward increasingly participatory models. Research is generally thought of as requiring a controlled environment or control groups.
In field settings directed at prevention and control of a public health problem, this is seldom realistic. Of the ten concepts contrasted in the table, the last three are especially worth noting. Unlike pure academic research models, program evaluation acknowledges and incorporates differences in values and perspectives from the start, may address many questions besides attribution, and tends to produce results for varied audiences.

While push or pull can motivate a program to conduct good evaluations, program evaluation efforts are more likely to be sustained when staff see the results as useful information that can help them do their jobs better. Data gathered during evaluation enable managers and staff to create the best possible programs, to learn from mistakes, to make modifications as needed, to monitor progress toward program goals, and to judge the success of the program in achieving its short-term, intermediate, and long-term outcomes. Most public health programs aim to change behavior in one or more target groups and to create an environment that reinforces sustained adoption of these changes, with the intention that changes in environments and behaviors will prevent and control diseases and injuries. Through evaluation, you can track these changes and, with careful evaluation designs, assess the effectiveness and impact of a particular program, intervention, or strategy in producing these changes.

Recognizing the importance of evaluation in public health practice and the need for appropriate methods, the World Health Organization (WHO) established the Working Group on Health Promotion Evaluation. The Working Group prepared a set of conclusions and related recommendations to guide policymakers and practitioners. In 1999, CDC published Framework for Program Evaluation in Public Health and some related recommendations. To maximize the chances evaluation results will be used, you need to create a "market" for them.
You determine the market by focusing evaluations on questions that are most salient, relevant, and important. You ensure the best evaluation focus by understanding where the questions fit into the full landscape of your program description, and especially by ensuring that you have identified and engaged stakeholders who care about these questions and want to take action on the results.

The steps in the CDC Framework are informed by a set of standards for evaluation. The 30 standards cluster into four groups. Utility: Who needs the evaluation results? Will the evaluation provide relevant information in a timely manner for them? Feasibility: Are the planned evaluation activities realistic given the time, resources, and expertise at hand? Propriety: Does the evaluation protect the rights of individuals and protect the welfare of those involved? Does it engage those most directly affected by the program and changes in the program, such as participants or the surrounding community? Accuracy: Will the evaluation produce findings that are valid and reliable, given the needs of those who will use the results?

Sometimes the standards broaden your exploration of choices. Often, they help reduce the options at each step to a manageable number. For example, asking these same kinds of questions as you approach evidence gathering will help identify the options that will be most useful, feasible, proper, and accurate for this evaluation at this time. Thus, the CDC Framework approach supports the fundamental insight that there is no such thing as the right program evaluation. Rather, over the life of a program, any number of evaluations may be appropriate, depending on the situation.

The preferred approach is to choose an evaluation team that includes internal program staff, external stakeholders, and possibly consultants or contractors with evaluation expertise.
An initial step in the formation of a team is to decide who will be responsible for planning and implementing evaluation activities. One program staff person should be selected as the lead evaluator to coordinate program efforts. This person should be responsible for evaluation activities, including planning and budgeting for evaluation, developing program objectives, addressing data collection needs, reporting findings, and working with consultants. The lead evaluator is ultimately responsible for engaging stakeholders, consultants, and other collaborators who bring the skills and interests needed to plan and conduct the evaluation.

Characteristics of a Good Evaluator. Has experience in the type of evaluation needed. Is comfortable with quantitative data sources and analysis. Is able to work with a wide variety of stakeholders, including representatives of target populations. Can develop innovative approaches to evaluation while considering the realities affecting a program. Incorporates evaluation into all program activities. Understands both the potential benefits and risks of evaluation. Educates program personnel in designing and conducting the evaluation. Will give staff the full findings.

Although this staff person should have the skills necessary to competently coordinate evaluation activities, he or she can choose to look elsewhere for technical expertise to design and implement specific tasks. However, developing in-house evaluation expertise and capacity is a beneficial goal for most public health organizations. The lead evaluator should be willing and able to draw out and reconcile differences in values and standards among stakeholders and to work with knowledgeable stakeholder representatives in designing and conducting the evaluation. Seek additional evaluation expertise in programs within the health department, through external partners, and through CDC.
External consultants can provide high levels of evaluation expertise from an objective point of view. Important factors to consider when selecting consultants are their level of professional training, experience, and ability to meet your needs. Overall, it is important to find a consultant whose approach to evaluation, background, and training best fit your program. Be sure to check all references carefully before you enter into a contract with any consultant. |


August 2017

Categories |
